Supported Large Language Models (LLMs) in OCI Generative AI Service

Oracle Cloud Infrastructure (OCI) Generative AI supports large language models (LLMs) such as Cohere’s Command series and Meta’s Llama 2, which users can apply to a range of text generation and processing tasks within the platform. These models offer multilingual capabilities across more than 100 languages.

| LLM | Model parameters | Context window | Usage | Notes |
|---|---|---|---|---|
| Command model by Cohere | 52 billion | 4096 tokens | Text generation, chat, text summarization | |
| Command Light model by Cohere | 6 billion | 4096 tokens | Text generation, chat, text summarization | Smaller, faster version of the Command model |
| Llama 2 70B Chat model by Meta | 70 billion | 4096 tokens | Text generation, chat | |

OCI Generative AI also supports embedding models by Cohere, including Embed English and Embed English Lite.

Summarization Models

Summarization is available through the Command model used for text generation, with some special parameters as described below:

- Temperature: Determines how creative the model should be. The default value is 1 and the maximum value is 5.

- Length: Approximate length of the summary. Choose from the available options: Short, Medium, or Long.

- Format: Whether to display the result as a free-form paragraph or as bullet points.

- Extractiveness: How much of the provided input to reuse in the summary.
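The parameters above can be gathered into a small, validated settings object before a request is sent. The following is a minimal sketch, not the service API: the class name `SummaryParams`, the option spellings (`SHORT`, `BULLETS`, `LOW`, and so on), the lower temperature bound of 0, and the defaults other than temperature are all assumptions made for illustration.

```python
from dataclasses import dataclass, asdict

# Option spellings are assumptions for illustration; check the service docs.
LENGTHS = {"SHORT", "MEDIUM", "LONG"}
FORMATS = {"PARAGRAPH", "BULLETS"}
EXTRACTIVENESS = {"LOW", "MEDIUM", "HIGH"}


@dataclass
class SummaryParams:
    """Hypothetical container for the summarization parameters."""
    temperature: float = 1.0          # default 1, maximum 5 (from the text)
    length: str = "MEDIUM"            # assumed default
    output_format: str = "PARAGRAPH"  # assumed default
    extractiveness: str = "MEDIUM"    # assumed default

    def __post_init__(self):
        # The lower bound of 0 is an assumption; the text only states the maximum.
        if not 0 <= self.temperature <= 5:
            raise ValueError("temperature must be between 0 and 5")
        if self.length not in LENGTHS:
            raise ValueError(f"length must be one of {sorted(LENGTHS)}")
        if self.output_format not in FORMATS:
            raise ValueError(f"output_format must be one of {sorted(FORMATS)}")
        if self.extractiveness not in EXTRACTIVENESS:
            raise ValueError(
                f"extractiveness must be one of {sorted(EXTRACTIVENESS)}"
            )

    def as_dict(self) -> dict:
        """Return the settings as a plain dictionary."""
        return asdict(self)


params = SummaryParams(length="SHORT", output_format="BULLETS")
print(params.as_dict())
```

Validating early like this surfaces a bad value (for example a temperature of 6) before any call to the service is made.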

Embedding Models

Embedding models convert text into numerical vectors (embeddings). These vectors can then be stored in a vector database and searched by similarity.
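To make the idea concrete, here is a minimal sketch of embedding text and storing it for similarity search. It does not call a real embedding model: `toy_embed` is a hypothetical stand-in that counts words from a tiny fixed vocabulary, and `InMemoryVectorStore` is an illustrative class, not a real vector database.

```python
import math

# Tiny fixed vocabulary; a real embedding model learns dense vectors instead.
VOCAB = ["oracle", "cloud", "generative", "ai",
         "vector", "database", "cake", "recipe"]


def toy_embed(text: str) -> list[float]:
    """Stand-in for an embedding model: normalized word counts over VOCAB."""
    words = text.lower().split()
    vec = [float(words.count(term)) for term in VOCAB]
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0  # avoid division by zero
    return [v / norm for v in vec]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; inputs are already unit length, so a dot product."""
    return sum(x * y for x, y in zip(a, b))


class InMemoryVectorStore:
    """Illustrative store that keeps (text, vector) pairs in a list."""

    def __init__(self):
        self._rows = []

    def add(self, text: str) -> None:
        self._rows.append((text, toy_embed(text)))

    def search(self, query: str, k: int = 1) -> list[str]:
        q = toy_embed(query)
        ranked = sorted(self._rows, key=lambda row: cosine(q, row[1]),
                        reverse=True)
        return [text for text, _ in ranked[:k]]


store = InMemoryVectorStore()
store.add("Oracle Cloud Generative AI service")
store.add("A recipe for chocolate cake")
print(store.search("generative ai"))
```

In a real application, `toy_embed` would be replaced by a call to an embedding model such as Embed English, and the list by a vector database.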

Customizing Pre-trained Models or LLMs

There are several ways to customize a pre-trained model or LLM so that it returns relevant responses to the user’s prompt. The following are some of the methods, together with the related OCI Generative AI concepts:

- Prompt Engineering: Customizes the LLM’s behavior through the prompt itself, using techniques such as in-context learning, few-shot prompting, and chain-of-thought prompting.

- Fine-Tuning: Optimizes the model on a smaller, domain-specific dataset. Recommended when the pre-trained model does not perform well on your task or you want to teach it something new.

- Retrieval Augmented Generation (RAG): Connects the LLM to a knowledge base (databases, wikis, vector databases, and so on), which helps it provide grounded responses.

- Custom Model in OCI Generative AI: A pre-trained large language model used as a base and fine-tuned with custom data to create a custom model. OCI Generative AI needs a dedicated AI cluster of type Fine-Tuning to fine-tune the model.

- Model Endpoint in OCI Generative AI: A designated point on a dedicated AI cluster where a large language model can accept user requests and send back responses, such as the model’s generated text. This requires a dedicated AI cluster of type Hosting, where the endpoint is created.

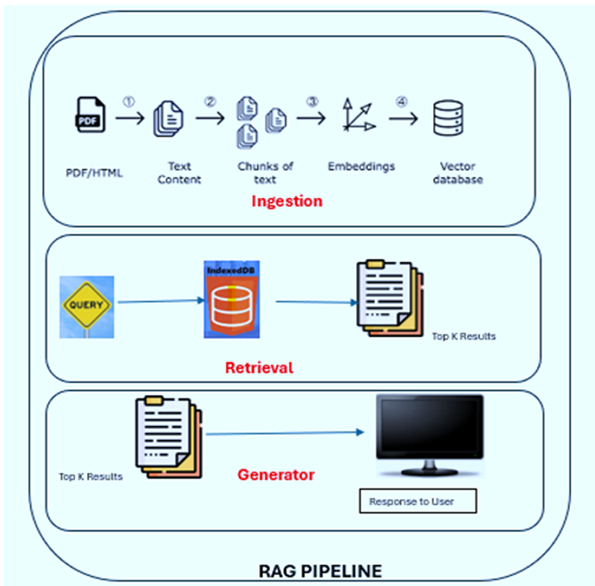
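Of the customization methods listed above, prompt engineering is the easiest to illustrate in a few lines. The sketch below assembles a few-shot prompt as a plain string; the function name `build_few_shot_prompt`, the `Review:`/`Sentiment:` labels, and the sample reviews are hypothetical and chosen only for illustration.

```python
def build_few_shot_prompt(instruction, examples, query,
                          input_label="Review", output_label="Sentiment"):
    """Build a few-shot prompt: instruction, worked examples, then the query.

    `examples` is a list of (input_text, expected_output) pairs.
    """
    lines = [instruction, ""]
    for text, answer in examples:
        lines.append(f"{input_label}: {text}")
        lines.append(f"{output_label}: {answer}")
        lines.append("")  # blank line between examples
    # The final block leaves the output empty for the model to complete.
    lines.append(f"{input_label}: {query}")
    lines.append(f"{output_label}:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as Positive or Negative.",
    [("The setup was quick and painless.", "Positive"),
     ("The console kept timing out.", "Negative")],
    "Support resolved my issue in minutes.",
)
print(prompt)
```

The resulting string would be sent to the model as the prompt; the worked examples steer the model toward the expected output format without any change to its weights.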

RAG Pipeline

The RAG pipeline consists of three steps, as shown in the diagram:

Step 1: Ingestion. The data is loaded into the vector database.

Step 2: Retrieval. The pipeline searches for the documents relevant to the query.

Step 3: Generation. The model generates human-like text and responds to the user.
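The three steps can be sketched end to end in plain Python. This is a simplified illustration under stated assumptions: retrieval uses word overlap instead of vector similarity, the store is an in-memory list rather than a vector database, and `fake_llm` is a hypothetical placeholder for a real model call.

```python
def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def ingest(documents):
    """Step 1: load documents into a toy store with precomputed word sets."""
    return [(doc, tokenize(doc)) for doc in documents]


def retrieve(store, question, k=1):
    """Step 2: rank stored documents by word overlap with the question."""
    q = tokenize(question)
    ranked = sorted(store, key=lambda item: len(q & item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def generate(question, context, llm):
    """Step 3: send the question plus retrieved context to an LLM callable."""
    prompt = (
        "Answer using only this context:\n"
        + "\n".join(context)
        + f"\n\nQuestion: {question}"
    )
    return llm(prompt)


def fake_llm(prompt):
    """Placeholder for a real model call; it only reports the prompt size."""
    return f"[model response to a {len(prompt)}-character prompt]"


store = ingest([
    "dedicated ai clusters host model endpoints",
    "embedding models convert text into vectors",
])
question = "what do embedding models do"
context = retrieve(store, question)
print(context)
print(generate(question, context, fake_llm))
```

Passing the LLM in as a callable keeps the pipeline independent of any particular model, so the placeholder can later be swapped for a hosted model endpoint.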

LangChain

LangChain is a framework for developing applications powered by language models. It offers a range of components that help us build LLM-powered applications.

LangChain Components

The following components are used to build a LangChain application:

- LLMs

- Prompts

- Memory

- Chains

- Vector Stores

- Document Loaders

Memory lets a chain retain context between calls. A chain interacts with memory at two points:

- After input but before chain execution (read from memory)

- After core logic but before output (write to memory)

- Two memory types commonly used in LangChain are Conversation Buffer Memory and Conversation Summary Memory.

- When the application is built with Streamlit, the memory can be kept per user session, for example in Streamlit’s session state.
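The two memory touchpoints (read before the chain runs, write after it) can be sketched without any framework. The code below is a conceptual illustration in plain Python, not the LangChain API: `BufferMemory` and `run_chain` are hypothetical names, and the `llm` argument is any callable that maps a prompt string to a response string.

```python
class BufferMemory:
    """Toy conversation buffer: keeps every turn verbatim."""

    def __init__(self):
        self.turns = []

    def load(self):
        """Return the conversation so far as a single string."""
        return "\n".join(self.turns)

    def save(self, user_input, output):
        """Append the latest exchange to the buffer."""
        self.turns.append(f"Human: {user_input}")
        self.turns.append(f"AI: {output}")


def run_chain(user_input, memory, llm):
    # Read from memory: after the input arrives, before the core logic runs.
    history = memory.load()
    prompt = f"{history}\nHuman: {user_input}\nAI:".lstrip()
    output = llm(prompt)
    # Write to memory: after the core logic, before the output is returned.
    memory.save(user_input, output)
    return output


def echo_llm(prompt):
    """Placeholder model that reports how many lines of prompt it received."""
    line_count = len(prompt.splitlines())
    return f"(reply based on {line_count} lines of prompt)"


memory = BufferMemory()
print(run_chain("What is a model endpoint?", memory, echo_llm))
print(run_chain("Which cluster type does it need?", memory, echo_llm))
```

A summary-style memory would follow the same read and write points but store a condensed summary of earlier turns instead of the full transcript.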

As we set our roadmap to innovate around OCI Generative AI touchpoints, we have set the stage for curious technocrats to understand each of these terms and components. Our research focuses primarily on Generative AI inside cloud environments to boost the user experience of the applications you develop. The fully managed OCI infrastructure comes with many Generative AI features that support business growth and help you meet your business objectives.

Generative AI isn’t just an integral part of your cloud infrastructure; it’s a strategic imperative where the capabilities and features become a game-changer for your business.